Social Hacking: Assignment 6

My initial idea for this assignment was to look at absence and presence in private space using a thermal camera. Unfortunately, I ended up having to wait for someone to bring in the thermal camera, so I scrapped that idea.

Another thing I’d been thinking about was reaction videos. I’m fascinated by the number of reaction videos on YouTube and by the performance of emotion in them. The intense interest others take in these videos shows, I think, a human need to connect with others and validate one’s emotional responses. Like watching a movie in a theater, sharing an intense reaction with another person, even if only by watching them on a screen, connects us to each other regardless of age, gender, culture, or geographic location.

This being my first time working with computer vision, I decided to pare down my concept to its basics and see how far I could get in writing/executing the code. I found out that Niccola was also looking at emotion analysis with the Google Vision API, so we teamed up and went about creating our own interpretations of how to execute actions based on the presentation of certain feelings.

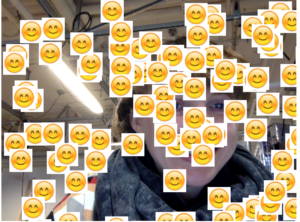

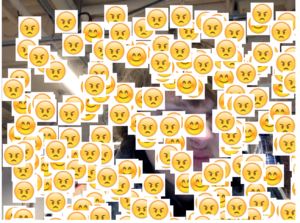

Through a weird chain of ideas – involving sentiment analysis, Walmart employees and affect theory – I wrote a program that would cover the screen in emojis based on the emotion detected. I wanted hundreds of emojis to cover the person’s face as a way of symbolizing how technology oversimplifies our feelings and reactions.
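As a rough illustration of the emotion-to-emoji step, and not the program from this assignment, here is a minimal Python sketch. It assumes a face result shaped like one entry of the Google Vision face detection response, with the fields `joyLikelihood`, `sorrowLikelihood`, `angerLikelihood` and `surpriseLikelihood`. The emoji choices, the `pick_emoji` name and the `LIKELY` threshold are all hypothetical.

```python
from typing import Optional

# Likelihood values as reported by Google Vision, ordered weakest to strongest.
LIKELIHOOD_RANK = {
    "UNKNOWN": 0,
    "VERY_UNLIKELY": 1,
    "UNLIKELY": 2,
    "POSSIBLE": 3,
    "LIKELY": 4,
    "VERY_LIKELY": 5,
}

# Hypothetical mapping from each emotion field to an emoji.
EMOJI_FOR_FIELD = {
    "joyLikelihood": "😀",
    "sorrowLikelihood": "😢",
    "angerLikelihood": "😠",
    "surpriseLikelihood": "😮",
}


def pick_emoji(face: dict, threshold: str = "LIKELY") -> Optional[str]:
    """Return the emoji for the strongest emotion at or above the threshold."""
    best_field = None
    best_rank = LIKELIHOOD_RANK[threshold] - 1
    for field in EMOJI_FOR_FIELD:
        # Missing or unrecognized values are treated as UNKNOWN.
        rank = LIKELIHOOD_RANK.get(face.get(field, "UNKNOWN"), 0)
        if rank > best_rank:  # strict comparison: on a tie, the earlier field wins
            best_field, best_rank = field, rank
    return EMOJI_FOR_FIELD[best_field] if best_field else None
```

Returning `None` when nothing clears the threshold means a neutral face draws no emoji at all, rather than falling back to an arbitrary one.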

The code worked well when there were only a couple of emojis on the screen, but as soon as they covered the entire canvas it seemed to screw with the sentiment analysis: the emoji faces, rather than the facial reactions, appeared to get piped in as the thing to be analyzed.
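If the analysis really was reading the emoji-covered canvas, one possible way around it is to analyze the raw camera frame first and only then draw the emojis onto a separate copy for display. The sketch below is a toy illustration of that ordering under that assumption, not the code from this project: a frame is a small grid of characters, and `detect` stands in for whatever emotion analysis is used. All names are hypothetical.

```python
import copy
from typing import Callable, Dict, List, Tuple

# Toy stand-in for an image: a grid of single-character "pixels".
Frame = List[List[str]]


def cover_with_emoji(frame: Frame, emoji: str) -> Frame:
    """Return a new frame of the same size with every cell replaced by the emoji."""
    return [[emoji for _ in row] for row in frame]


def process_frame(
    raw: Frame,
    detect: Callable[[Frame], str],
    emoji_for: Dict[str, str],
) -> Tuple[str, Frame]:
    """Analyze the untouched camera frame, then build the display frame."""
    # Step 1: the detector only ever sees the raw frame, never the overlay.
    emotion = detect(raw)
    # Step 2: draw onto a separate frame so the raw input stays clean.
    emoji = emoji_for.get(emotion)
    display = cover_with_emoji(raw, emoji) if emoji else copy.deepcopy(raw)
    return emotion, display
```

Because the overlay is built on a copy, the next frame's analysis cannot pick up emoji faces drawn for the previous one.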

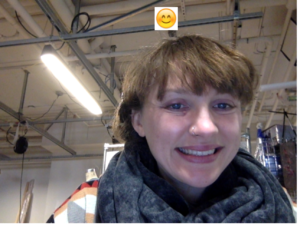

Here’s the code with 2 emojis:

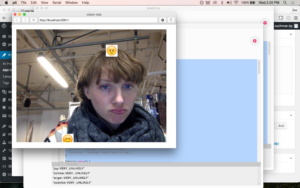

And the code I attempted with 4 (Google Vision lets you analyze four emotions – anger, sorrow, joy, surprise):

Some screenshots to give an idea: